AI Agents, Productivity Automation, Solo Build, MCP Protocol

SmithClaw

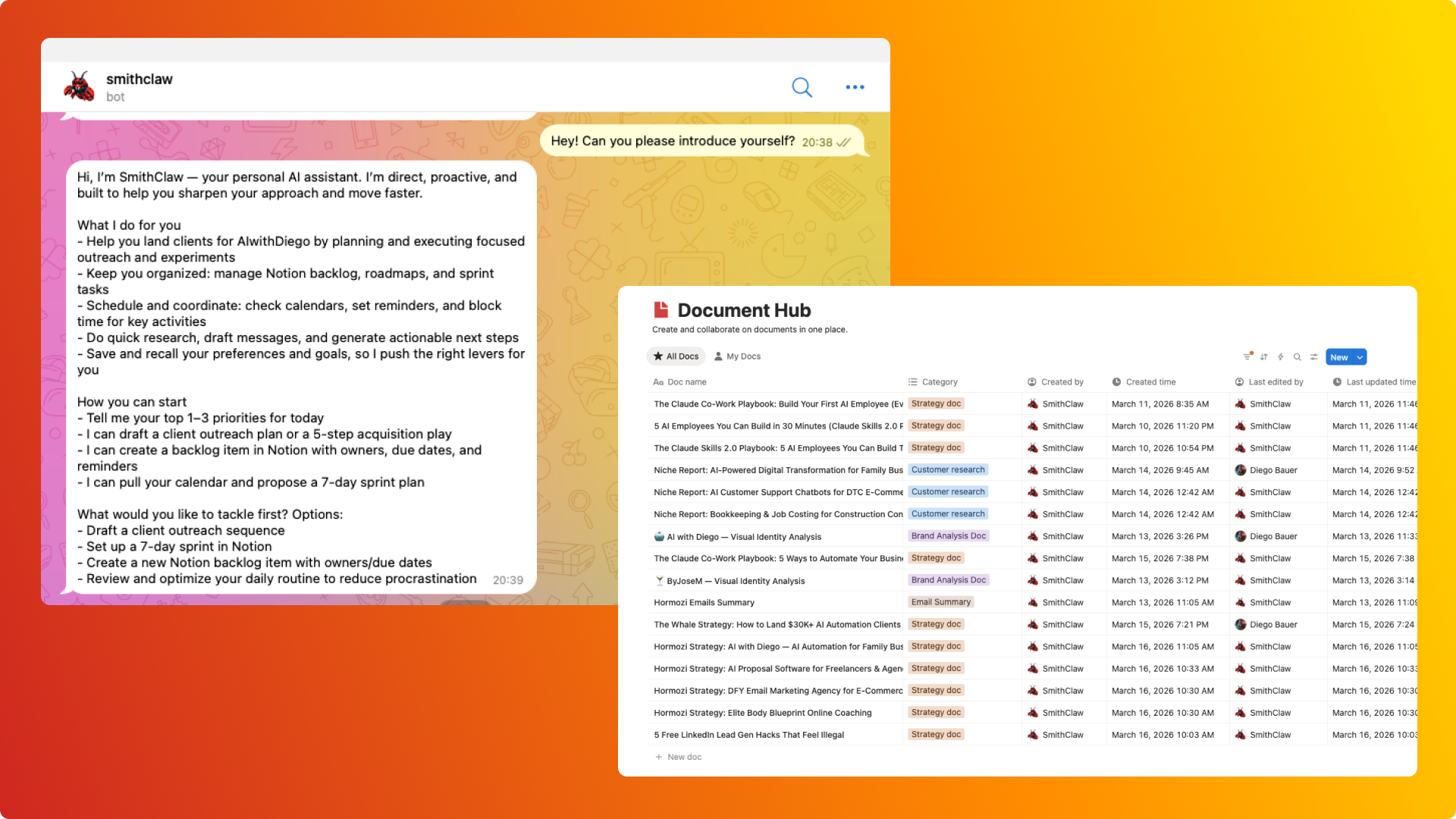

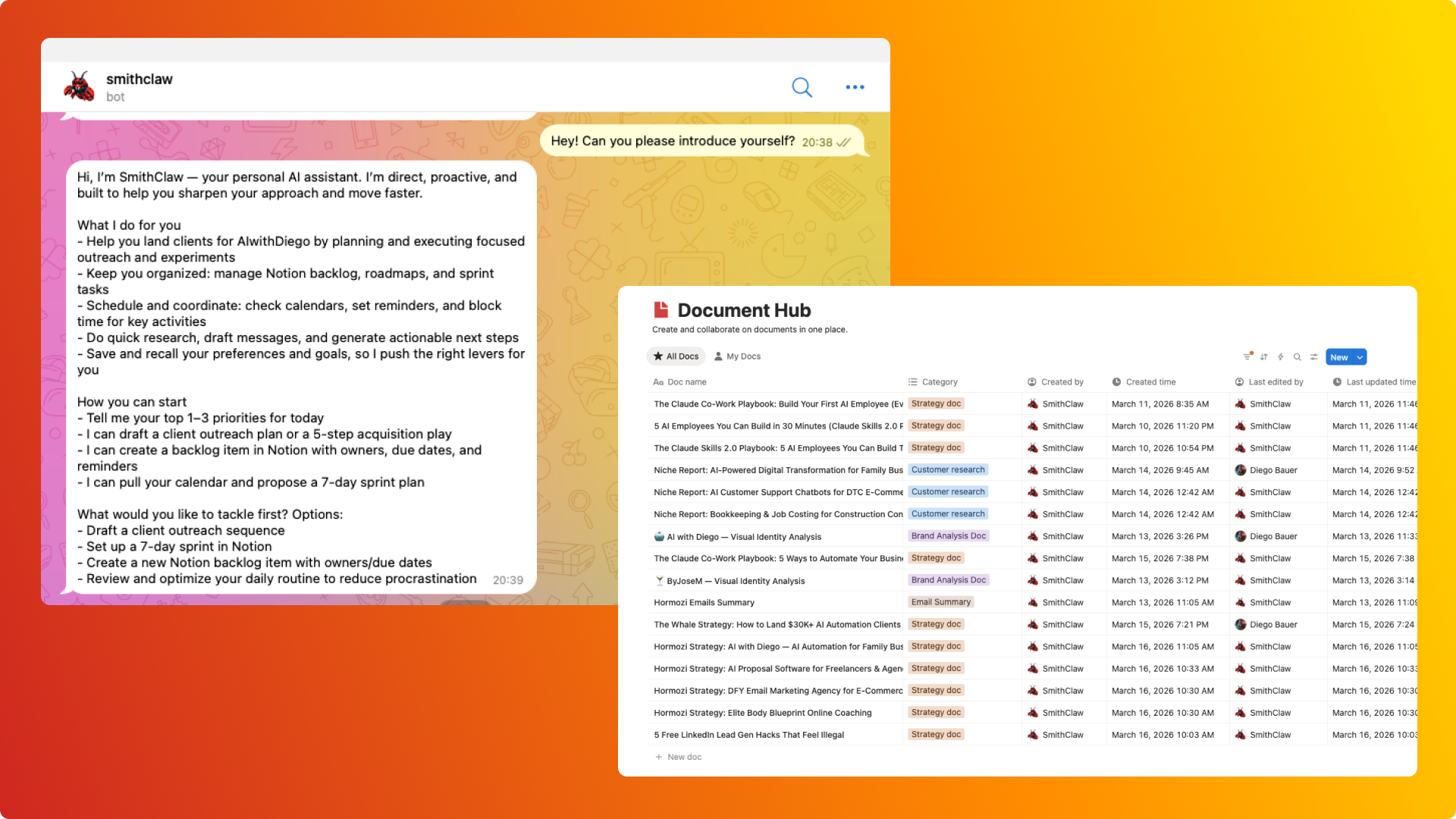

Self-hosted personal AI agent living inside Telegram that connects to Notion, Gmail, Google Calendar, and the web — handling research, document creation, memory, and scheduled automation through a single conversational interface. Built in three weeks as a secure, privacy-first alternative to emerging open-source agent frameworks, featuring a 5-tier memory system with vector embeddings (Supabase pgvector), proactive daily briefings, voice I/O, and multi-agent mesh workflows.

The Problem

In early 2026, open-source AI agent frameworks promised autonomous assistants but came with critical flaws: most required broad system access with minimal sandboxing (security risks), cloud-hosted solutions meant personal data lived on someone else's infrastructure (no ownership), and tools were generic by design — they couldn't learn preferences, connect to a specific workspace, or adapt to how someone actually works. There was no way to get the benefits of an autonomous AI agent — research, automation, document creation, memory — without compromising on security, privacy, or personalization.

Key Metrics

Email synthesis: 24+ emails in under 2 minutes

Tools shipped: 17+ integrated tools

Memory architecture: 5-tier memory system with vector embeddings

Proactive intelligence: 3 daily touchpoints

My Role & Approach

Analyzed emerging agent framework architectures (OpenAI Operator, open-source clones) and identified security gaps. Chose Telegram as the interface for zero adoption friction: it was already in daily use, is rich enough for markdown, voice, and status updates, and requires no frontend to build. Built a self-hosted architecture for full data ownership. Implemented a 5-tier memory system: chat history (SQLite), context pruning (in-memory), soul identity (markdown), knowledge graph (SQLite entity-relationship), and vector embeddings (Supabase pgvector with 1536-dimension embeddings). Adopted the Model Context Protocol (MCP) for all external service connections (Notion, Google Workspace). Benchmarked multiple LLMs through OpenRouter and selected GPT-5 Nano for its balance of speed, cost, and tool-calling reliability. Added proactive intelligence (morning briefings, evening recaps, heartbeat check-ins) and voice I/O (Whisper + ElevenLabs).
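One way to picture how the five tiers could feed a single prompt is sketched below. It is an illustration only: the source names the tiers but not their interfaces, so `MemorySources`, `pruneHistory`, `buildPromptContext`, the section headings, and the character budget are all assumptions, and the SQLite and pgvector lookups are replaced by plain arrays.

```typescript
// Hypothetical shapes; the real project reads these from SQLite, markdown, and pgvector.
interface ChatMessage {
  role: "user" | "assistant";
  text: string;
}

interface MemorySources {
  chatHistory: ChatMessage[];  // tier 1: chat history
  soulIdentity: string;        // tier 3: persona text kept in markdown
  graphFacts: string[];        // tier 4: entity-relationship facts
  semanticMatches: string[];   // tier 5: results of a vector search
}

// Tier 2 (context pruning): keep the newest messages that fit a character budget.
function pruneHistory(history: ChatMessage[], maxChars: number): ChatMessage[] {
  const kept: ChatMessage[] = [];
  let used = 0;
  for (const msg of [...history].reverse()) {
    if (used + msg.text.length > maxChars) break;
    kept.unshift(msg); // restore chronological order
    used += msg.text.length;
  }
  return kept;
}

// Merge all tiers into one block of prompt context, in a fixed order.
function buildPromptContext(src: MemorySources, maxHistoryChars: number): string {
  const recent = pruneHistory(src.chatHistory, maxHistoryChars);
  return [
    "## Identity",
    src.soulIdentity,
    "## Known facts",
    ...src.graphFacts.map((fact) => `- ${fact}`),
    "## Related memories",
    ...src.semanticMatches.map((match) => `- ${match}`),
    "## Recent conversation",
    ...recent.map((msg) => `${msg.role}: ${msg.text}`),
  ].join("\n");
}
```

Keeping the pruning step separate from storage means the full history can stay on disk while only a bounded slice reaches the model on each turn.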

Tools & Tech

TypeScript, Node.js, Grammy (Telegram), OpenRouter, GPT-5 Nano, SQLite, Supabase (pgvector), MCP Protocol, OpenAI Whisper, ElevenLabs TTS

The Build Process

1. Research & Discovery

Analyzed the architecture of emerging agent frameworks by studying GitHub repos, reviewed community implementations, evaluated the security models of existing solutions (credential handling, system access, data storage), studied MCP as a secure integration standard, and benchmarked multiple LLMs through OpenRouter for cost, latency, and tool-calling reliability.

2. Architecture & Design

Designed a Telegram-first interface for zero adoption friction, a self-hosted architecture for full data ownership, a 5-tier memory system (chat history, context pruning, soul identity, knowledge graph, vector embeddings), an MCP-first integration architecture for all external services, and a proactive intelligence layer with scheduled briefings and heartbeat check-ins.
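The proactive layer comes down to knowing when the next scheduled touchpoint is due. The sketch below shows one way to compute that; the touchpoint times, the use of UTC, and the names `msUntilNext` and `nextTouchpoint` are assumptions made for illustration, since the source lists the touchpoints but not when or how they fire.

```typescript
interface Touchpoint {
  name: string;
  hour: number;   // 0-23, UTC in this sketch
  minute: number; // 0-59
}

// Hypothetical schedule; the actual times are not given in the source.
const touchpoints: Touchpoint[] = [
  { name: "morning briefing", hour: 7, minute: 0 },
  { name: "heartbeat check-in", hour: 13, minute: 0 },
  { name: "evening recap", hour: 20, minute: 0 },
];

// Milliseconds from `now` until the next occurrence of hour:minute (UTC).
function msUntilNext(now: Date, hour: number, minute: number): number {
  const next = new Date(
    Date.UTC(now.getUTCFullYear(), now.getUTCMonth(), now.getUTCDate(), hour, minute),
  );
  // If today's slot has already passed (or is exactly now), roll to tomorrow.
  if (next.getTime() <= now.getTime()) {
    next.setUTCDate(next.getUTCDate() + 1);
  }
  return next.getTime() - now.getTime();
}

// Pick whichever touchpoint fires soonest.
function nextTouchpoint(now: Date, schedule: Touchpoint[]): { name: string; inMs: number } {
  if (schedule.length === 0) throw new Error("schedule is empty");
  return schedule
    .map((t) => ({ name: t.name, inMs: msUntilNext(now, t.hour, t.minute) }))
    .reduce((best, cur) => (cur.inMs < best.inMs ? cur : best));
}
```

A long-running process could pass `inMs` to `setTimeout`, run the briefing, and then call `nextTouchpoint` again. A real deployment would likely use the user's own timezone rather than UTC.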

3. Build & Iterate

Built the full agentic reasoning loop with 17+ tools, a Telegram interface with text and voice support, Notion and Google Workspace integration via MCP, multi-agent mesh workflows (coordinator, researcher, coder, and reviewer agents), a scheduled task system, webhook endpoints for external triggers, and the profile interview onboarding flow that personalizes the agent from day one.
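For readers unfamiliar with the term, an agentic reasoning loop alternates between asking the model what to do and running the tool it asks for, until the model produces a final answer. The sketch below is a generic, minimal version and not SmithClaw's code: the types, the `runAgentLoop` name, and the step limit are assumptions, and the model is an injected synchronous function so the example stays self-contained (a real LLM call would be asynchronous).

```typescript
type ToolArgs = Record<string, unknown>;
type Tool = (args: ToolArgs) => string;

// What the model can return on each turn: a tool request or a final answer.
type ModelTurn =
  | { kind: "tool_call"; tool: string; args: ToolArgs }
  | { kind: "final"; text: string };

// The model sees the transcript so far and decides the next step.
type Model = (transcript: string[]) => ModelTurn;

function runAgentLoop(
  model: Model,
  tools: Map<string, Tool>,
  userMessage: string,
  maxSteps = 5, // guard against a model that never stops calling tools
): string {
  const transcript: string[] = [`user: ${userMessage}`];
  for (let step = 0; step < maxSteps; step++) {
    const turn = model(transcript);
    if (turn.kind === "final") return turn.text;

    // Run the requested tool and feed the result (or an error) back to the model.
    const tool = tools.get(turn.tool);
    const result = tool ? tool(turn.args) : `error: unknown tool "${turn.tool}"`;
    transcript.push(`tool(${turn.tool}): ${result}`);
  }
  return "Stopped: step limit reached before a final answer.";
}
```

Reporting unknown tools back to the model as text, instead of throwing, gives it a chance to recover on the next turn; the step limit bounds cost if it does not.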

4. Validate & Refine

Validated through daily hands-on use as both developer and primary user. Confirmed the reliability of the core agentic loop, Notion document creation, email retrieval and synthesis, scheduled briefings, the voice pipeline, and memory persistence across sessions. Currently investigating hallucination patterns, memory retrieval accuracy, and vector search threshold tuning.
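To make "vector search threshold tuning" concrete, the sketch below ranks stored memories by cosine similarity and drops anything under a cutoff. It is an in-memory stand-in, not the project's query: in the real system pgvector would do the comparison inside Supabase, the source does not say which distance metric is used, and `Memory`, `recall`, and the example threshold values are assumptions.

```typescript
interface Memory {
  text: string;
  embedding: number[]; // 1536 dimensions in the project; any length works here
}

// Cosine similarity: 1 means same direction, 0 means unrelated (orthogonal).
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("embedding dimensions differ");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  a.forEach((x, i) => {
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  });
  if (normA === 0 || normB === 0) return 0; // avoid dividing by zero
  return dot / Math.sqrt(normA * normB);
}

// Return up to topK memories whose similarity meets the threshold, best first.
function recall(
  query: number[],
  memories: Memory[],
  threshold: number,
  topK: number,
): { text: string; score: number }[] {
  return memories
    .map((m) => ({ text: m.text, score: cosineSimilarity(query, m.embedding) }))
    .filter((r) => r.score >= threshold)
    .sort((x, y) => y.score - x.score)
    .slice(0, topK);
}
```

The threshold is the tuning knob: set it too low and loosely related memories reach the prompt, which can encourage hallucinated recollections; set it too high and relevant context is silently dropped.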

Learnings

“Security concerns with open-source agents are real: understanding every line of code that has access to email, calendar, and documents is non-negotiable. Memory is the hard problem. The agentic loop is relatively straightforward, but what makes an agent useful is how well it remembers context, surfaces relevant information, and avoids hallucinating memories. Model selection is a first-class design decision: benchmarking showed dramatic differences in tool-calling reliability, latency, and cost. Building an agent teaches more about agents than using one. Debugging tool calls, tuning memory systems, and observing hallucination patterns were more valuable for the AI agency business than any course or tutorial. Start with fewer tools and add more based on real need.”